Data Strategy

The Hidden Problem in Your Data Pipeline

YTVidHub can download subtitles in many languages, but data quality is still the deciding factor for reliable downstream use.

By YTVidHub Engineering | Last reviewed Nov 2025

Quick Risk Summary

- Accessing subtitles is easier than validating subtitle quality.

- ASR errors can silently break sentiment, entity, and intent analysis.

- For production-grade datasets, run quality checks before model ingestion.

As the developers of YTVidHub, we are often asked: "Do you support languages other than English?" The answer is yes.

Our batch YouTube subtitle downloader can access all available subtitle files provided by YouTube, including Spanish, German, Japanese, and Mandarin Chinese.

But a subtitle file being available to download does not make it reliable as data. For researchers and analysts, the quality of the text inside the file is often the primary bottleneck.

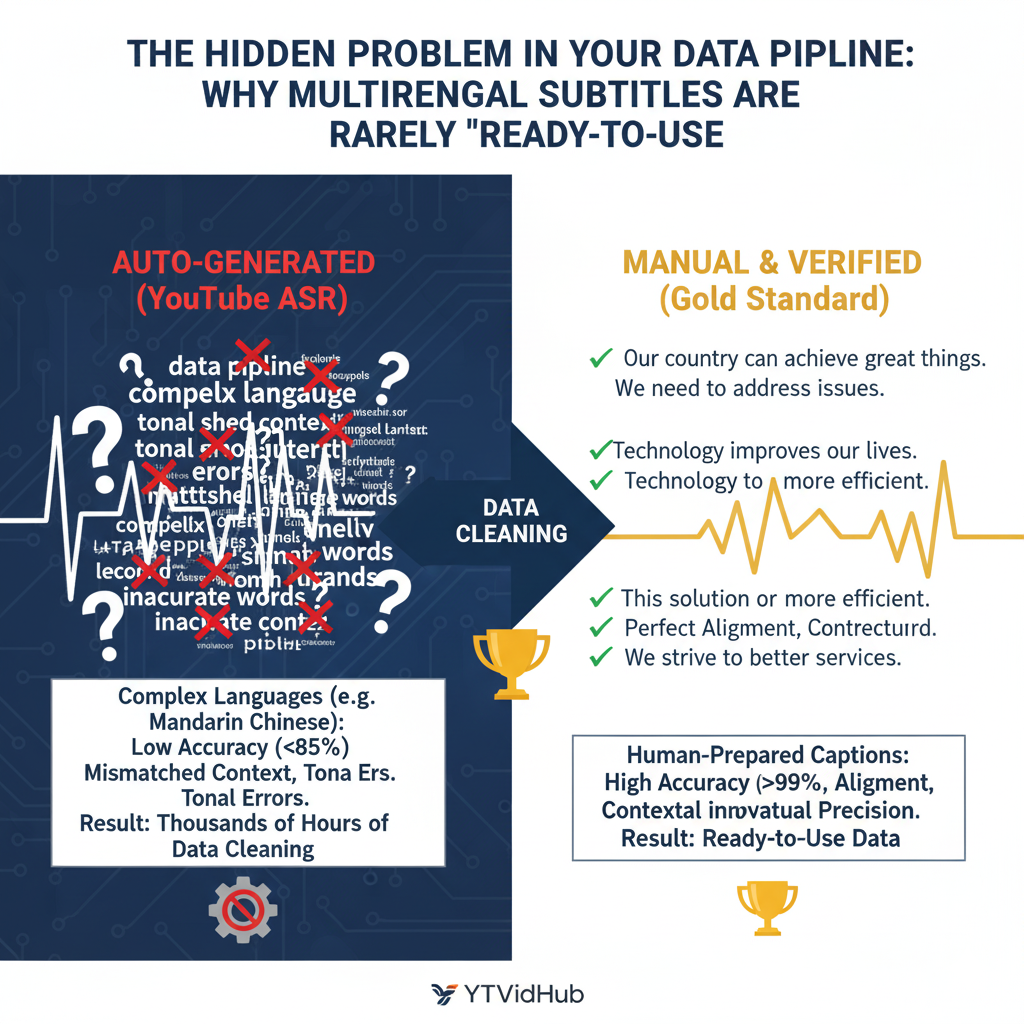

Three Data Quality Tiers

Analysis quality depends on identifying the subtitle tier before ingestion.

Tier 1: Reliable Gold Standard

Manually uploaded captions prepared by creators. These are typically the strongest source for model training and research.

Tier 2: Unstable ASR Source

YouTube automatic speech recognition works well in some cases, but quality often degrades in niche, multilingual, or high-speed audio.

Tier 3: Error Multiplier

Auto-translated captions inherit ASR noise and then add translation artifacts. Avoid this tier for high-stakes work.
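
As a rough illustration of tagging each track with a tier before ingestion, here is a minimal Python sketch. The `SubtitleTrack` class and its two boolean flags are hypothetical names introduced for this example; they are not part of YTVidHub or any YouTube API, and you would populate them from whatever track metadata your own download step records.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SubtitleTrack:
    """Hypothetical record describing one downloaded subtitle track."""
    language: str
    is_auto_generated: bool   # assumed flag: track was produced by ASR
    is_auto_translated: bool  # assumed flag: track was machine-translated


def classify_tier(track: SubtitleTrack) -> int:
    """Return 1 (manual), 2 (ASR), or 3 (auto-translated).

    Any auto-translated track is treated as Tier 3, even when the
    translation source was a manual caption. That is a conservative
    assumption made for this sketch.
    """
    if track.is_auto_translated:
        return 3
    if track.is_auto_generated:
        return 2
    return 1
```

Storing the tier next to each transcript makes it possible to filter or weight sources later without re-inspecting the files.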

Keyword Focus: Subtitle Accuracy in Multilingual Pipelines

This page targets practical queries like subtitle accuracy, YouTube subtitle errors, and multilingual caption quality. Search intent is not just informational. Most readers are trying to decide whether a dataset is safe for model training, analytics, or public publishing.

To satisfy that intent, we separate source types and failure modes: manual captions, auto-generated ASR captions, and auto-translated captions. This structure helps teams estimate downstream risk before they invest in chunking, embedding, or retrieval pipelines.

The practical takeaway is simple: subtitle access is a logistics problem, while subtitle accuracy is a quality-control problem. Treat them as separate gates in your workflow, and your downstream model outputs become far more reliable.

Pre-Ingestion Quality Audit Checklist

- Check 3-5 sampled transcript segments against the original audio.

- Measure named entity correctness on domain-specific terms.

- Measure the ratio of non-speech segments (such as [Music]) and flag tracks where it is high before sending them to NLP pipelines.

- Mark low-confidence language tracks for manual review.
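
Two of the checks above are easy to automate in part. The sketch below, in Python, assumes a transcript has already been split into a list of text segments. The function names are illustrative, and the regular expression only recognizes segments that consist entirely of a bracketed or parenthesized tag.

```python
import random
import re

# Matches a segment that is only a tag, e.g. "[Music]" or "(Laughter)".
NON_SPEECH = re.compile(r"^\s*[\[(][^\])]*[\])]\s*$")


def non_speech_ratio(segments: list[str]) -> float:
    """Fraction of segments that contain nothing but a non-speech tag."""
    if not segments:
        return 0.0
    tagged = sum(1 for s in segments if NON_SPEECH.match(s))
    return tagged / len(segments)


def sample_for_review(segments: list[str], k: int = 5, seed: int = 0) -> list[str]:
    """Pick up to k segments for a human to compare against the audio.

    A fixed seed keeps the sample reproducible between audit runs.
    """
    rng = random.Random(seed)
    return rng.sample(segments, min(k, len(segments)))
```

The sampling step only selects what to listen to; the comparison against audio still needs a person or a separate reference transcript.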

The Real Cost of Cleaning

Time saved in bulk download can be lost in post-cleaning if quality controls are skipped.

1. SRT Formatting Noise

SRT is optimized for playback, not analytics:

- Timecode fragments (00:00:03 --> 00:00:06)

- Sentences split across timing windows

- Non-speech tags like [Music] or (Laughter)
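
A minimal Python sketch of stripping these three kinds of noise is shown below. It is a simplification, not a full SRT parser: it drops cue numbers and timecode lines, removes bracketed or parenthesized tags, and joins the remaining text into one string. The function name `srt_to_text` is made up for this example.

```python
import re

# Timecode line such as "00:00:03,000 --> 00:00:06,000" (milliseconds optional).
TIMECODE = re.compile(
    r"^\d{2}:\d{2}:\d{2}(?:[,.]\d{3})?\s*-->\s*\d{2}:\d{2}:\d{2}(?:[,.]\d{3})?"
)
# Non-speech tags such as "[Music]" or "(Laughter)".
TAG = re.compile(r"\[[^\]]*\]|\([^)]*\)")


def srt_to_text(srt: str) -> str:
    """Flatten SRT content into plain text for analysis."""
    kept = []
    for raw in srt.splitlines():
        line = raw.strip()
        # Skip blank lines, cue numbers, and timecode lines.
        if not line or line.isdigit() or TIMECODE.match(line):
            continue
        line = TAG.sub("", line).strip()
        if line:
            kept.append(line)
    # Join fragments that were split by timing windows and collapse whitespace.
    return re.sub(r"\s+", " ", " ".join(kept))
```

Two known limitations of this sketch: a caption line that is only digits is mistaken for a cue number, and legitimate parenthetical speech is removed along with the tags. Sentence boundaries are not restored, so a separate segmentation step is still needed.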

2. Garbage In, Garbage Out

Inaccurate transcripts produce inaccurate analytics. If core entities are misrecognized, your downstream sentiment, topic, and retrieval results can fail.

Language-Specific Risk Patterns

Subtitle failures differ by language and channel format. Tonal languages often suffer from character substitution when audio quality drops. Fast dialogue channels increase segmentation errors, and domain-heavy videos introduce vocabulary mismatches that look fluent but change meaning.

Because of this, a single global accuracy estimate is not enough. Track quality by language, source type, and domain context. A stream acceptable for light content review may still be unsafe for model training, sentiment analysis, or entity extraction pipelines.
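
One way to track quality along these three dimensions is to group reviewed samples by language, source type, and domain. The Python sketch below assumes each reviewed sample is a dictionary with hypothetical keys `language`, `source`, `domain`, and `accuracy` (a score between 0 and 1 from your own review process). The 0.95 threshold is only a placeholder, not a recommended value.

```python
from collections import defaultdict
from statistics import mean


def accuracy_by_group(samples):
    """Average accuracy per (language, source, domain) group."""
    groups = defaultdict(list)
    for s in samples:
        key = (s["language"], s["source"], s["domain"])
        groups[key].append(s["accuracy"])
    return {key: mean(values) for key, values in groups.items()}


def groups_below(samples, threshold=0.95):
    """Sorted list of groups whose average accuracy is under the threshold."""
    scores = accuracy_by_group(samples)
    return sorted(key for key, value in scores.items() if value < threshold)
```

Reporting per group rather than one global number makes it visible when, for example, ASR tracks in one language and domain fall short while manual captions elsewhere are fine.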

The practical takeaway: separate access metrics from quality metrics. Fast download success does not guarantee reliable semantic output.

Remediation Loop for Better Subtitle Quality

1. Detect: sample representative transcript windows per language and channel class.

2. Classify: split formatting noise issues from true semantic errors.

3. Correct: apply glossary cleanup and manual review where quality gains are significant.

4. Validate: compare corrected output with source audio for high-risk segments.

5. Monitor: track quality drift and refresh thresholds as content mix changes.
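
The glossary cleanup in the Correct step could look roughly like the Python sketch below. The glossary maps known misrecognitions to the intended term. The entries used in the usage note are invented examples, and in practice the glossary would come from your own review of domain-specific errors.

```python
import re


def apply_glossary(text: str, glossary: dict[str, str]) -> tuple[str, int]:
    """Replace known misrecognitions; return the new text and replacement count."""
    total = 0
    for wrong, right in glossary.items():
        # Whole-phrase, case-insensitive match on the misrecognized form.
        pattern = re.compile(rf"\b{re.escape(wrong)}\b", re.IGNORECASE)
        # A function replacement avoids treating backslashes in `right` as escapes.
        text, count = pattern.subn(lambda _match, r=right: r, text)
        total += count
    return text, total
```

For example, `apply_glossary("We trained it in pie torch.", {"pie torch": "PyTorch"})` replaces the phrase and reports one replacement. The count gives a rough signal for the Monitor step. Word-boundary matching assumes space-delimited text, so languages written without spaces, such as Japanese or Chinese, would need a different matching strategy.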

Validation Notes

- Findings are based on repeated multilingual subtitle export and cleaning workflows.

- Quality tiers separate source reliability for practical data decisions.

- Limitations are explicit so teams can avoid hidden accuracy debt.

Frequently Asked Questions

Why are auto-generated subtitles often inaccurate in some languages?

ASR quality varies by language coverage, speaking speed, accent diversity, and domain vocabulary. Accuracy tends to drop most in low-resource or tonal languages.

Are auto-translated subtitles suitable for research datasets?

Usually not. Auto-translation compounds upstream ASR errors, so quality checks and manual review are required before production use.

Building a Solution for Usable Data

We solved access first. Our next focus is improving accuracy and offering ready-to-use output formats.

We are developing a Pro service for near human-level transcription. Meanwhile, try our playlist subtitle downloader for bulk processing.